Stop the Subscription Bleed: Access Kling, Runway, and Veo 3 Directly in Premiere Pro

Lewis Shatel

18 Nov 2025

Let me paint a picture you probably know too well. You're deep in a cut. The client needs a specific aerial shot of a city at dusk and you don't have it. So you alt-tab to your browser, open Runway, type a prompt, wait 90 seconds, download the file, find it buried in your Downloads folder, rename it something sensible, drag it into your project bin, then finally drop it on the timeline. Then you realize the aspect ratio is wrong. So you do it again.

That whole process took seven minutes. For one clip. And you're paying $35/month for the privilege.

Now multiply that by Kling for motion quality, ElevenLabs for voiceover scratch tracks, and Veo 3 when you need something that actually looks cinematic. You're managing four browser tabs, four separate logins, four billing cycles, and a Downloads folder that looks like a digital landfill. This is the reality of AI-assisted editing in 2025, and it's quietly destroying your flow state and your margin.

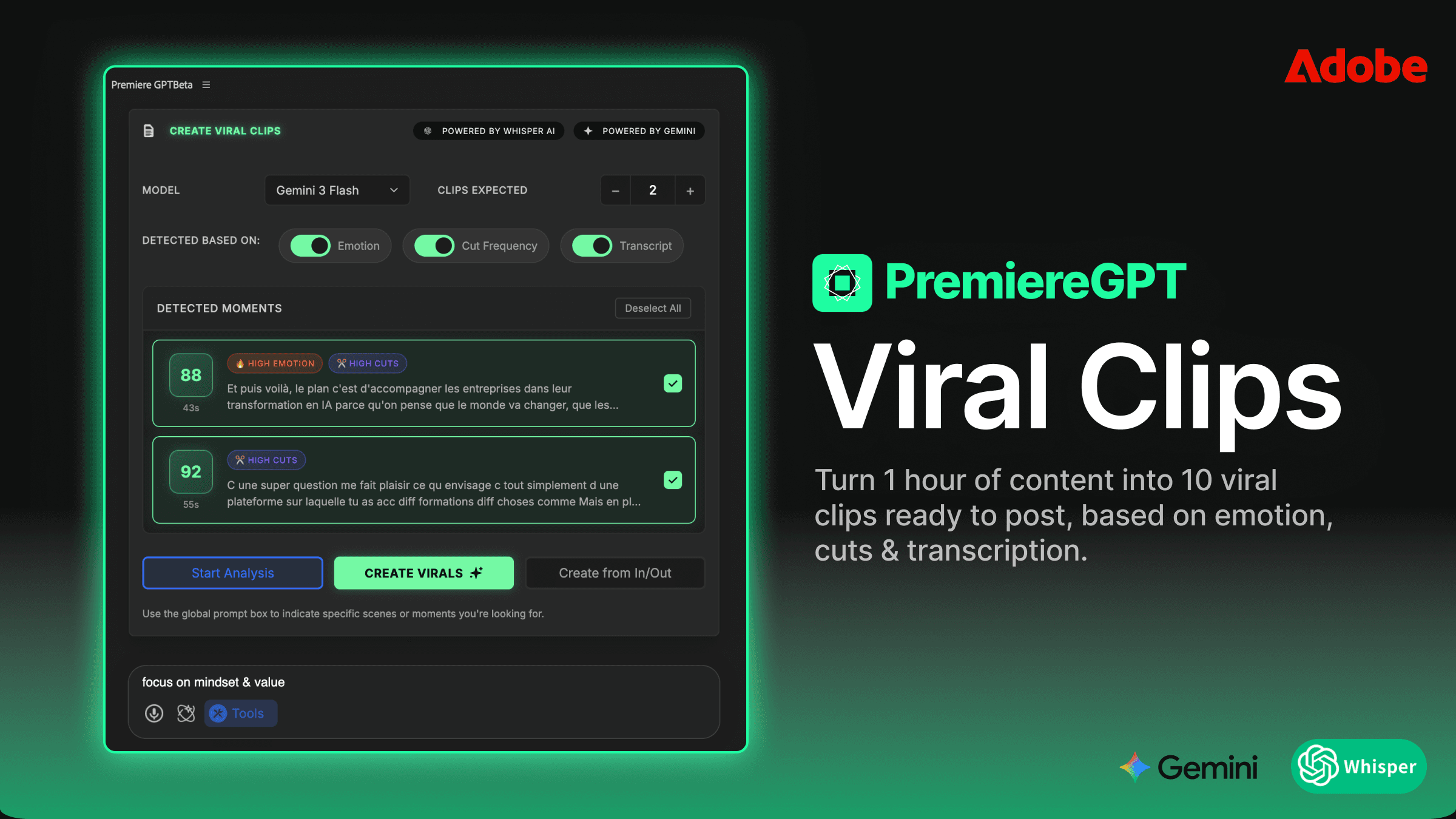

We built the GenAI Hub inside PremiereGPT specifically to kill this problem. Here's exactly how it works and why the math makes it a no-brainer.

The 'Alt-Tab' Tax: Why Browser-Based AI is Killing Your Flow

There's a concept in cognitive science called "context switching cost." Every time you pull your attention away from one task and redirect it to another, your brain pays a penalty. For knowledge workers, one widely cited estimate puts that penalty at up to 40% of productive time. For video editors, it's arguably worse because our work is inherently non-linear and flow-dependent. Breaking concentration to manage a file download isn't just annoying; it's structurally expensive.

The browser-based AI workflow has a specific tax structure. First, there's the generation latency—the time you spend waiting for a model to render your clip while you sit in a different application doing nothing useful. Second, there's the file management overhead—downloading, renaming, and organizing assets that have no automatic connection to your project's bin structure. Third, and most insidiously, there's the context mismatch problem: the AI tool has zero knowledge of your sequence. It doesn't know your frame rate, your color grade, your existing footage's lens characteristics, or the mood of the scene you're cutting. It just generates something generic and you hope for the best.

The round-trip between Premiere and a browser-based tool isn't a minor inconvenience. It's a workflow fracture. And when you're doing it a dozen times per edit session across multiple AI platforms, you're not just losing time—you're losing the mental thread of your edit.

Professional editors who've adopted AI tools heavily report spending 20–30 minutes per session on pure file management. That's time that used to go into pacing, color work in Lumetri, or actually refining the cut. The alt-tab tax is real, it's measurable, and it compounds across every project.

One Panel, Every Model: Breaking Down the GenAI Hub

The GenAI Hub is a native panel inside PremiereGPT that lives in your Premiere Pro workspace exactly like your Lumetri Color panel or your Essential Graphics panel. It doesn't open a browser. It doesn't redirect you anywhere. You stay inside Premiere, full stop.

Inside the panel, you have direct access to the generation engines that actually matter right now: Veo 3 for Google's highest-fidelity video generation, Kling AI for its industry-leading motion coherence and subject consistency, Runway Gen-3 for its mature prompt-following and style control, and NanoBanana for fast, lightweight generation when you need quick B-roll iterations without burning heavy credits.

Each model is accessible from the same interface. You write your prompt once. You select your model from a dropdown. You hit generate. The panel handles the API call, monitors the render queue, and delivers the asset directly into your project. No Downloads folder. No manual import. The clip appears in a designated project bin and, depending on your settings, can drop directly onto a specified V-track in your timeline.
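To make that flow concrete, here's a rough sketch of the submit, poll, and deliver loop described above. It's a simulation, not PremiereGPT's actual code: `FakeProvider`, `generate_to_bin`, the job states, and the `.mp4` naming are all made up for illustration, and a plain Python list stands in for the project bin.

```python
import time
from dataclasses import dataclass


@dataclass
class Job:
    job_id: str
    polls_until_done: int  # simulation only: polls remaining before "done"


class FakeProvider:
    """Stand-in for a provider API. Hypothetical, not a real client library."""

    def __init__(self):
        self._jobs = {}

    def submit(self, prompt: str, model: str) -> str:
        job_id = f"{model}-{len(self._jobs) + 1}"
        self._jobs[job_id] = Job(job_id, polls_until_done=2)
        return job_id

    def status(self, job_id: str) -> str:
        job = self._jobs[job_id]
        if job.polls_until_done > 0:
            job.polls_until_done -= 1
            return "rendering"
        return "done"


def generate_to_bin(provider, prompt, model, bin_items, poll_seconds=0.0):
    """Submit a job, wait for the render, then add the clip to the bin."""
    job_id = provider.submit(prompt, model)
    while provider.status(job_id) != "done":
        time.sleep(poll_seconds)  # the editor keeps cutting while this waits
    clip_name = f"{job_id}.mp4"
    bin_items.append(clip_name)  # no Downloads folder, no manual import
    return clip_name


genai_assets_bin = []
clip = generate_to_bin(
    FakeProvider(), "aerial shot of a city at dusk", "kling", genai_assets_bin
)
print(clip)              # kling-1.mp4
print(genai_assets_bin)  # ['kling-1.mp4']
```

The point of the shape is that the prompt and the bin are the only things the editor touches; everything between them is the panel's job.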

The integration is built at the API level for each provider, which means you're getting the same generation quality as their native web apps—not a degraded or throttled version. When Runway pushes a model update, you get it. When Kling releases a new motion mode, it surfaces in the panel. We're not caching or proxying anything. You're hitting the actual models.

The panel also surfaces model-specific parameters where they matter. Kling's camera motion controls, Runway's style reference inputs, Veo 3's cinematic presets—these aren't flattened into a generic interface. If a model has a parameter worth exposing, it's there.

The Pay-As-You-Go Math: Why Consolidating Credits Beats Subscription Bloat

Let's do the actual math because this is where the conversation gets real.

A professional editor running a typical AI-assisted workflow in 2025 might be subscribed to: Runway Standard at $35/month, Kling Pro at $30/month, ElevenLabs Creator at $22/month, a Storyblocks subscription at $30/month as a fallback, and possibly a Pika or Luma subscription at another $25/month. That's $142/month with all five, and that's before you hit the usage caps on any of them and get prompted to upgrade.
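If you want to check the total or plug in your own stack, the sum is a few lines of Python. The prices are the ones quoted above; swap in whatever you actually pay.

```python
# Monthly prices in USD, as quoted in the paragraph above.
subscriptions = {
    "Runway Standard": 35,
    "Kling Pro": 30,
    "ElevenLabs Creator": 22,
    "Storyblocks": 30,
    "Pika or Luma": 25,
}

monthly_total = sum(subscriptions.values())
annual_total = monthly_total * 12

print(f"Monthly: ${monthly_total}")  # Monthly: $142
print(f"Annual:  ${annual_total}")   # Annual:  $1704
```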

Here's the brutal part: you're not using all of them at full capacity every month. Some months you're heavy on voiceover work and barely touch Runway. Other months you're generating B-roll constantly and ElevenLabs sits idle. But the subscriptions don't care. They bill you regardless.

The PremiereGPT credit system works differently. You buy a pool of credits. Every generation across every model draws from that same pool at a transparent per-generation rate. You use Kling heavily this month? Your credits go toward Kling. Next month you're in a voiceover-heavy project? Same credits, different model. The pool doesn't expire on a monthly billing cycle. You're paying for actual usage, not for the theoretical right to use something.
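Here's a minimal sketch of what a shared pool looks like as a data structure. The per-generation rates in `CREDIT_RATES` are placeholders invented for this example (actual rates aren't listed in this post), and `CreditPool` is an illustrative class, not the product's internals.

```python
from dataclasses import dataclass, field

# Hypothetical per-generation credit costs, for illustration only.
CREDIT_RATES = {"kling": 10, "runway": 8, "veo3": 15}


@dataclass
class CreditPool:
    balance: int
    usage: dict = field(default_factory=dict)  # credits spent per model

    def charge(self, model: str) -> int:
        """Deduct one generation's cost from the shared balance."""
        cost = CREDIT_RATES[model]
        if cost > self.balance:
            raise ValueError(f"not enough credits for {model}")
        self.balance -= cost
        self.usage[model] = self.usage.get(model, 0) + cost
        return self.balance


pool = CreditPool(balance=100)
pool.charge("kling")
pool.charge("kling")
pool.charge("veo3")

print(pool.balance)  # 65
print(pool.usage)    # {'kling': 20, 'veo3': 15}
```

There is one balance and no per-model allowance, so a Kling-heavy month and a Veo-heavy month draw from the same number.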

For a typical mid-volume editor generating maybe 40–60 AI assets per month across different modalities, the credit math consistently comes out 35–50% cheaper than maintaining equivalent separate subscriptions. And that's before you account for the subscription tiers you were over-paying for just to get access to one or two features.
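To see what that range means in dollars, here's the arithmetic applied to the $142/month stack from earlier. Treating that stack as the "equivalent separate subscriptions" baseline is an assumption for this example; your baseline will differ.

```python
SUBSCRIPTION_TOTAL = 142.0    # USD/month, sum of the five subscriptions listed earlier
SAVINGS_RANGE = (0.35, 0.50)  # the 35-50% figure claimed above
ASSETS_RANGE = (40, 60)       # the 40-60 assets per month figure claimed above


def spend_after_saving(baseline: float, saving: float) -> float:
    """Monthly spend once a fractional saving is applied to the baseline."""
    return round(baseline * (1 - saving), 2)


highest_spend = spend_after_saving(SUBSCRIPTION_TOTAL, SAVINGS_RANGE[0])
lowest_spend = spend_after_saving(SUBSCRIPTION_TOTAL, SAVINGS_RANGE[1])

print(f"Implied spend: ${lowest_spend:.2f} to ${highest_spend:.2f} per month")

# Best case spreads the lowest spend over the most assets, and vice versa.
best_per_asset = lowest_spend / ASSETS_RANGE[1]
worst_per_asset = highest_spend / ASSETS_RANGE[0]
print(f"Implied cost per asset: ${best_per_asset:.2f} to ${worst_per_asset:.2f}")
```

On those assumptions, the claim works out to roughly $71 to $92 a month instead of $142.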

We're also not trying to lock you into PremiereGPT-exclusive models. The credits work against the real models from the real providers. There's no bait-and-switch where the "integrated" version is a lesser product. You're buying access, not a walled garden.

From Prompt to Timeline: The Zero-Click Import Workflow

The import workflow is where the time savings become visceral. Here's what the process actually looks like in practice.

Your playhead is parked on a gap in your sequence. You need a five-second shot of rain hitting a neon-lit street at night to bridge two interview cuts. You open the GenAI Hub panel—it's already docked in your workspace. You type your prompt. You select Kling for the motion quality. You set duration to five seconds. You hit generate.

While generation runs, you keep editing. The panel shows a progress indicator in the corner of your screen. When the clip is ready, it appears in your GenAI Assets bin automatically—no file dialog, no manual import, no Downloads folder archaeology. If you've enabled the auto-place setting, it drops directly onto your V2 track at the playhead position, trimmed to the exact duration you specified.

That's it. You didn't leave Premiere. You didn't touch your file system. The clip is in your timeline, already at your sequence frame rate, ready for a Lumetri grade.

For editors who work with multicam clips or complex track layouts, the auto-place behavior is configurable. You can specify which V-track receives generated assets, whether they drop at the playhead or into the bin only, and whether the system automatically creates a new track if your specified track is occupied. It's not a rigid system—it adapts to how you actually work.
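Those three settings boil down to a small decision, sketched below. The names (`AutoPlaceSettings`, `resolve_target_track`) are invented for illustration, and one behavior is an assumption: when the target track is occupied and track creation is off, this sketch falls back to bin-only.

```python
from dataclasses import dataclass
from typing import Optional, Set


@dataclass
class AutoPlaceSettings:
    enabled: bool = True                   # False means bin only
    target_track: int = 2                  # V2, as in the example above
    create_track_if_occupied: bool = True


def resolve_target_track(
    settings: AutoPlaceSettings,
    occupied_tracks: Set[int],
    track_count: int,
) -> Optional[int]:
    """Return the V-track to place the clip on, or None for bin only.

    occupied_tracks holds the V-tracks that already have media at the
    playhead position.
    """
    if not settings.enabled:
        return None
    if settings.target_track not in occupied_tracks:
        return settings.target_track
    if settings.create_track_if_occupied:
        return track_count + 1  # a new track above the existing ones
    return None  # assumption: fall back to bin only


print(resolve_target_track(AutoPlaceSettings(), {1}, track_count=3))     # 2
print(resolve_target_track(AutoPlaceSettings(), {1, 2}, track_count=3))  # 4
```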

Customizing the Generation: Using AI Context to Match Your Sequence

Generic AI generation is the fastest way to produce footage that looks like it came from a completely different film than the one you're cutting. The lighting is wrong, the motion feel is different, the implied lens characteristics don't match. It sticks out immediately to any trained eye.

The GenAI Hub addresses this through what we call AI Context—a set of parameters derived from your active sequence that get appended to your generation prompt automatically. When you generate from within an active sequence, the system can read your sequence settings and, optionally, analyze the clip at your playhead to extract style descriptors: approximate color temperature, motion blur characteristics, depth of field feel, and dominant color palette.

These descriptors get translated into prompt language and appended to your generation request. If you're cutting a handheld documentary piece with a warm, slightly desaturated grade, the system can automatically append context like "handheld camera movement, warm tungsten color temperature, shallow depth of field, documentary style" to your prompt—without you having to type it manually every time.

You can review and edit the appended context before generating. You can also save context presets for recurring project styles. If you're doing a series of episodes with a consistent visual language, you define the context once and it applies to every generation session for that project.
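Mechanically, the append-and-review step is simple string assembly. Here's a minimal sketch, assuming descriptors arrive as a list of short phrases; `CONTEXT_PRESETS`, the `warm-doc` preset name, and `build_prompt` are hypothetical names for this example, not the panel's API.

```python
from typing import Dict, List, Sequence

# Hypothetical saved presets, keyed by project style name.
CONTEXT_PRESETS: Dict[str, List[str]] = {
    "warm-doc": [
        "handheld camera movement",
        "warm tungsten color temperature",
        "shallow depth of field",
        "documentary style",
    ],
}


def build_prompt(user_prompt: str, descriptors: Sequence[str]) -> str:
    """Append non-empty style descriptors to the prompt, comma-separated."""
    cleaned = [d.strip() for d in descriptors if d.strip()]
    if not cleaned:
        return user_prompt
    return ", ".join([user_prompt.strip(), *cleaned])


# Review step: the editor can change the list before it is appended.
context = list(CONTEXT_PRESETS["warm-doc"])
context.remove("shallow depth of field")

print(build_prompt("rain hitting a neon-lit street at night", context))
# rain hitting a neon-lit street at night, handheld camera movement, warm tungsten color temperature, documentary style
```

Copying the preset before editing it keeps the saved version intact for the next session.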

This doesn't guarantee perfect visual consistency—no AI generation system does right now. But it dramatically reduces the number of regeneration attempts you need before you get something that actually cuts into your sequence without looking jarring. In testing, editors reported needing 40% fewer regeneration attempts when using AI Context versus prompting without sequence awareness.

Future-Proofing Your Edit: Why Being Model-Agnostic Matters

Here's something worth saying plainly: we don't care which AI model wins. Not commercially, not philosophically. Runway might be the best video generation tool today. Kling might leapfrog it next quarter. Veo 4 might make both of them irrelevant by the time you're reading this. That's not our problem to solve, and it shouldn't be yours either.

The single biggest mistake an editor can make right now is building a workflow dependency on one specific AI tool. The model landscape is changing faster than any software product cycle in the history of this industry. Tools that were best-in-class six months ago are already looking dated. Subscriptions you committed to based on last year's benchmarks may be locking you into inferior output.

Model-agnosticism isn't just a feature—it's a professional survival strategy. When you're working inside the GenAI Hub, switching from Runway to Kling to Veo 3 is a dropdown selection. Your prompt, your workflow, your timeline integration—none of it changes. You're not re-learning a new interface. You're not migrating your project to a different platform. You just select the model that produces the best output for your specific shot on that specific day.
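One common way to get that kind of swap is an adapter registry: the same prompt and duration go in, and a per-model function shapes the request. The sketch below assumes that pattern. The payload field names are invented for illustration and do not reflect any provider's real API schema.

```python
from typing import Callable, Dict

Request = Dict[str, object]
Adapter = Callable[[str, int], Request]


# Each adapter maps the same inputs to a provider-shaped payload.
# Field names are hypothetical.
def runway_adapter(prompt: str, seconds: int) -> Request:
    return {"provider": "runway", "prompt_text": prompt, "seconds": seconds}


def kling_adapter(prompt: str, seconds: int) -> Request:
    return {"provider": "kling", "prompt": prompt, "duration": seconds}


def veo3_adapter(prompt: str, seconds: int) -> Request:
    return {"provider": "veo3", "text": prompt, "length_s": seconds}


ADAPTERS: Dict[str, Adapter] = {
    "runway": runway_adapter,
    "kling": kling_adapter,
    "veo3": veo3_adapter,
}


def build_request(model: str, prompt: str, seconds: int) -> Request:
    """The 'dropdown': pick an adapter by name; the caller's code stays the same."""
    try:
        adapter = ADAPTERS[model]
    except KeyError:
        raise ValueError(f"unknown model: {model}") from None
    return adapter(prompt, seconds)


for model in ("runway", "kling", "veo3"):
    print(build_request(model, "rain on a neon-lit street at night", 5))
```

Adding a new model in this design means adding one function and one dictionary entry; nothing upstream of `build_request` changes.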

We add new models as they become production-viable. When a new generation engine hits the market and proves itself on real editorial work, it goes into the panel. You don't need to sign up for a new service, create another account, or figure out another billing relationship. Your existing credit pool works against it immediately.

This is the actual long-term value proposition: you're not buying access to a specific AI tool. You're buying a stable, permanent interface to whatever the best available AI generation is at any given moment. The models will keep changing. Your workflow doesn't have to.

Stop paying for five subscriptions to tools you're using at 30% capacity. Stop managing a Downloads folder full of AI-generated clips that may or may not be named something useful. Stop alt-tabbing out of your edit to babysit a browser-based render queue. The friction is optional. The subscription bleed is optional. The workflow exists to eliminate both.

Want to stop generating AI sludge? We put together The GenAI Prompt Bible for Editors—a curated PDF of 50+ tested prompts specifically engineered to generate high-quality B-roll, SFX, and voiceover assets that actually cut into a professional timeline without looking like they came from a demo reel. These aren't generic "a cinematic shot of..." prompts. They're structured, model-specific, and tested against real editorial use cases. Grab the free PDF and stop guessing.